Chapter 28: Implement Guardrails and Escalation Paths

Overview

Pre-Deployment Testing: Run agents in shadow mode alongside humans. Compare decisions, measure error rates, analyze divergence.
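As a rough illustration of the comparison step, the sketch below assumes each shadow-mode case is logged with both the human's decision (the one actually executed) and the agent's proposed decision (never executed). The names `ShadowRecord` and `divergence_report` are hypothetical, and the human decision is treated as the reference, so the number it reports is a divergence rate rather than a true error rate.

```python
from dataclasses import dataclass


@dataclass
class ShadowRecord:
    """One logged case from a shadow-mode run (hypothetical schema)."""
    case_id: str
    human_decision: str  # the decision that was actually executed
    agent_decision: str  # what the agent would have done; never executed


def divergence_report(records: list[ShadowRecord]) -> dict:
    """Summarize how often the shadow agent disagreed with the human."""
    if not records:
        return {"total": 0, "divergence_rate": 0.0, "diverging_cases": []}
    diverging = [r.case_id for r in records if r.agent_decision != r.human_decision]
    return {
        "total": len(records),
        "divergence_rate": len(diverging) / len(records),
        "diverging_cases": diverging,  # review these by hand to analyze divergence
    }


records = [
    ShadowRecord("c1", "approve", "approve"),
    ShadowRecord("c2", "reject", "approve"),
    ShadowRecord("c3", "approve", "approve"),
    ShadowRecord("c4", "escalate", "escalate"),
]
report = divergence_report(records)
print(report)
```

The list of diverging case IDs is usually more useful than the rate alone, since each disagreement still has to be inspected to decide whether the agent or the human was wrong.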

Escalation Protocols: For actions above defined risk thresholds, agents must defer to a human or obtain additional approvals before acting.
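One way to picture a threshold-based escalation rule is the minimal sketch below. It assumes every proposed action already has a risk score between 0.0 and 1.0; how that score is produced is out of scope here. The threshold values and the names `Route` and `route_action` are illustrative assumptions, not part of any specific framework.

```python
from enum import Enum


class Route(Enum):
    EXECUTE = "execute"          # agent may act on its own
    NEEDS_APPROVAL = "approval"  # a human must approve before the agent acts
    DEFER = "defer"              # the agent hands the action to a human entirely


# Hypothetical thresholds on a 0.0-1.0 risk score; tune these per deployment.
APPROVAL_THRESHOLD = 0.4
DEFER_THRESHOLD = 0.8


def route_action(risk_score: float) -> Route:
    """Map a risk score to an escalation route, checking the highest tier first."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError(f"risk_score must be in [0, 1], got {risk_score}")
    if risk_score >= DEFER_THRESHOLD:
        return Route.DEFER
    if risk_score >= APPROVAL_THRESHOLD:
        return Route.NEEDS_APPROVAL
    return Route.EXECUTE


for score in (0.1, 0.4, 0.95):
    print(score, route_action(score).name)
```

Rejecting out-of-range scores instead of clamping them is a deliberate choice in this sketch: a malformed score is treated as a bug to surface, not as a low-risk action.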

Automated Policy Updates: Build systems that can inject new constraints or ethical guidelines without agent downtime.
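A minimal sketch of injecting constraints without downtime, assuming the agent consults a shared in-process policy store before each action. `PolicyStore`, `upsert`, and `violations` are hypothetical names; a production system would more likely load policies from a config service or database, but the idea of swapping rules in while the agent keeps running is the same.

```python
import threading
from typing import Callable

# A constraint takes a proposed action (a dict) and returns True if it is allowed.
Constraint = Callable[[dict], bool]


class PolicyStore:
    """Thread-safe registry of named constraints that can change at runtime."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._constraints: dict[str, Constraint] = {}
        self.version = 0  # incremented on every update, useful for audit logs

    def upsert(self, name: str, constraint: Constraint) -> None:
        """Add or replace a constraint while the agent keeps running."""
        with self._lock:
            self._constraints[name] = constraint
            self.version += 1

    def violations(self, action: dict) -> list[str]:
        """Return the names of all constraints the action breaks."""
        with self._lock:
            snapshot = list(self._constraints.items())
        # Evaluate outside the lock so a slow check never blocks an update.
        return [name for name, check in snapshot if not check(action)]


store = PolicyStore()
action = {"type": "refund", "amount": 900}

before = store.violations(action)  # no policies loaded yet
store.upsert(
    "refund_cap",
    lambda a: a.get("type") != "refund" or a.get("amount", 0) <= 500,
)
after = store.violations(action)   # the new constraint applies immediately
print(before, after, store.version)
```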

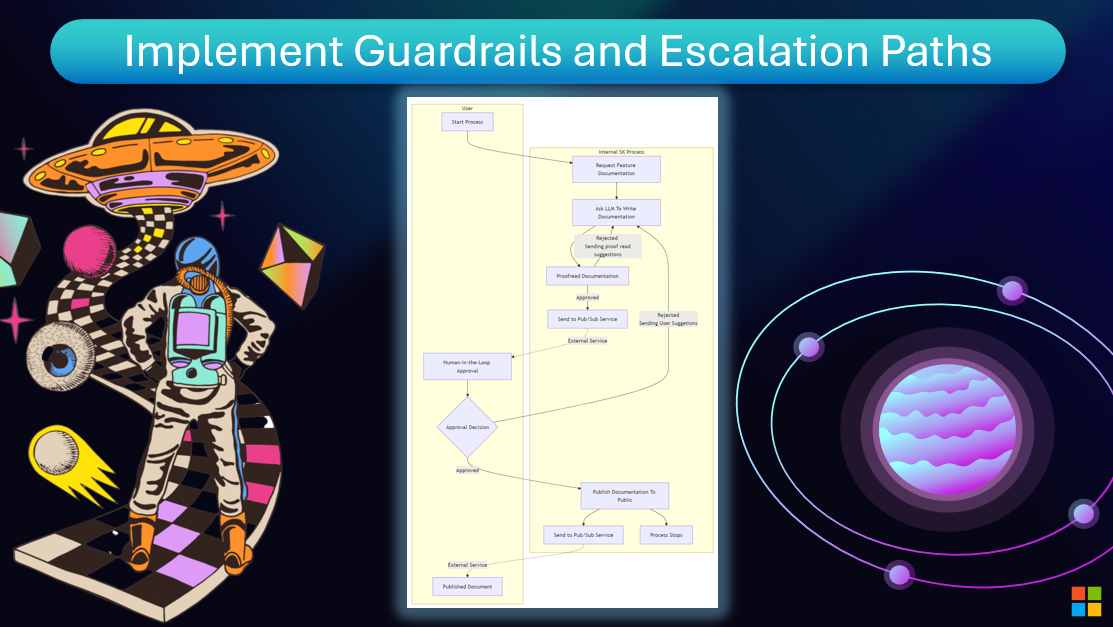

Human-in-the-Loop:

Another effective way to build trustworthy AI agent systems is to keep a human in the loop. This creates a flow in which users can give feedback to the agents during a run. Users essentially act as agents in a multi-agent system, either approving the running process or terminating it.
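The approve-or-terminate flow described above can be sketched without any agent framework. The example below is an assumption-laden illustration: `run_with_human_review` is a hypothetical helper, the "steps" are plain strings standing in for real agent actions, and the human is modeled as a callable so that scripted replies can replace a live prompt.

```python
from typing import Callable, Iterable


def run_with_human_review(
    proposed_steps: Iterable[str],
    ask_human: Callable[[str], str] = input,
) -> list[str]:
    """Walk through proposed steps, pausing for human feedback before each one."""
    executed: list[str] = []
    for step in proposed_steps:
        reply = ask_human(f"Agent proposes: {step!r}. Approve? [y/n/stop] ")
        reply = reply.strip().lower()
        if reply == "stop":
            break  # the human terminates the whole run
        if reply == "y":
            executed.append(step)  # stand-in for actually performing the step
        # any other reply rejects this step and moves on to the next one
    return executed


# Scripted replies stand in for a real person so the example runs unattended.
scripted = iter(["y", "n", "stop"])
result = run_with_human_review(
    ["draft email", "send email", "delete inbox"],
    ask_human=lambda prompt: next(scripted),
)
print(result)
```

Leaving `ask_human` at its default of `input` turns the same function into an interactive console prompt.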

Next Steps

Questions or feedback? Join the discussion on our GitHub repository or connect with the community.