Chapter 2: Responsibility isn’t a feature, it’s a foundation!¶

Overview¶

Every new technology raises the same questions: Is it reliable? Can it be trusted? But when that technology acts on behalf of humans, those questions shift from technical nuance to existential demand.

Trust is not just the currency of relationships; in the age of agentic AI, it's the bedrock of enterprise transformation.

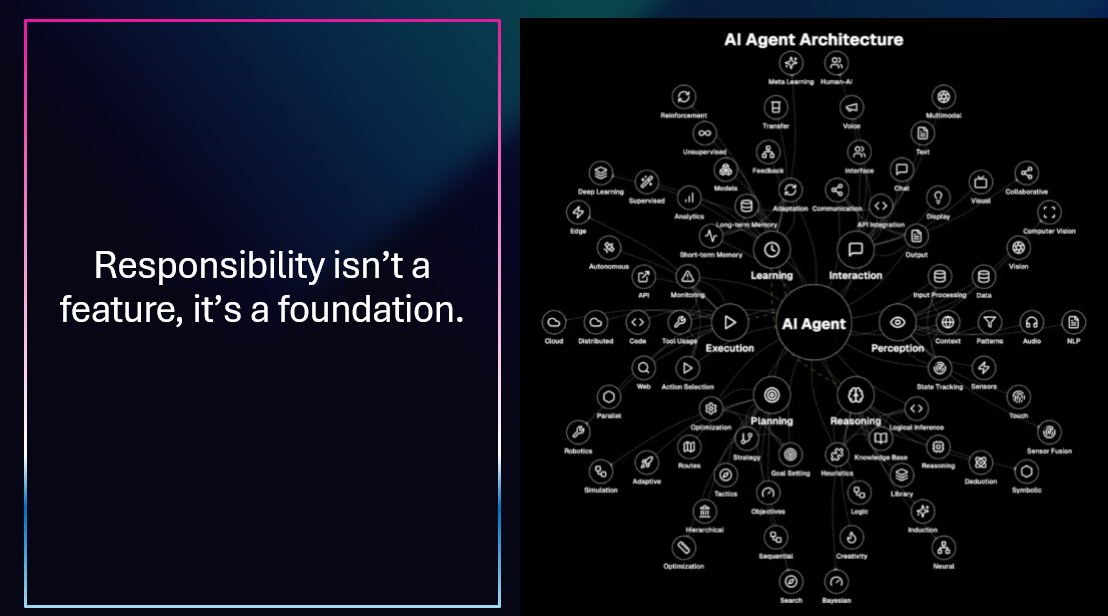

We are now at the inflection point where agents, software entities powered by AI that can reason, decide, and act are stepping into mission-critical enterprise workflows. These agents will surface insights, trigger actions, interface with customers, and in some cases, negotiate outcomes.

Building on Solid Ground¶

Responsibility isn't a feature you add at the end—it's the foundation you build on from the start:

- Architecture Matters: Design with observability, auditability, and safety constraints from day one

- Governance First: Establish policies before deployment, not after incidents

- Defense in Depth: Layer protections at model, safety system, application, and UX levels

- Continuous Validation: Trust is earned through ongoing measurement and improvement

Azure AI Foundry provides the foundation—from built-in content safety to evaluation frameworks—enabling you to build AI systems where responsibility is inherent, not bolted on. With GitHub Copilot and Azure tools working together, you can develop responsibly at the speed of innovation.

Learning Objectives¶

The leap from automation to autonomy magnifies the stakes.

So, how do we design agents that enterprises not only adopt but truly trust? What are the hard questions we must answer to move from technical promise to operational reality? And what does a practical path to implementation look like?

This is the trust problem in the agentic era, a challenge that, when solved, unlocks massive efficiency, scalability, and innovation.

Next Steps¶

Continue your learning journey:

Questions or feedback? Join the discussion on our GitHub repository or connect with the community.