Chapter 16: Manual evaluation for models and apps¶

Overview¶

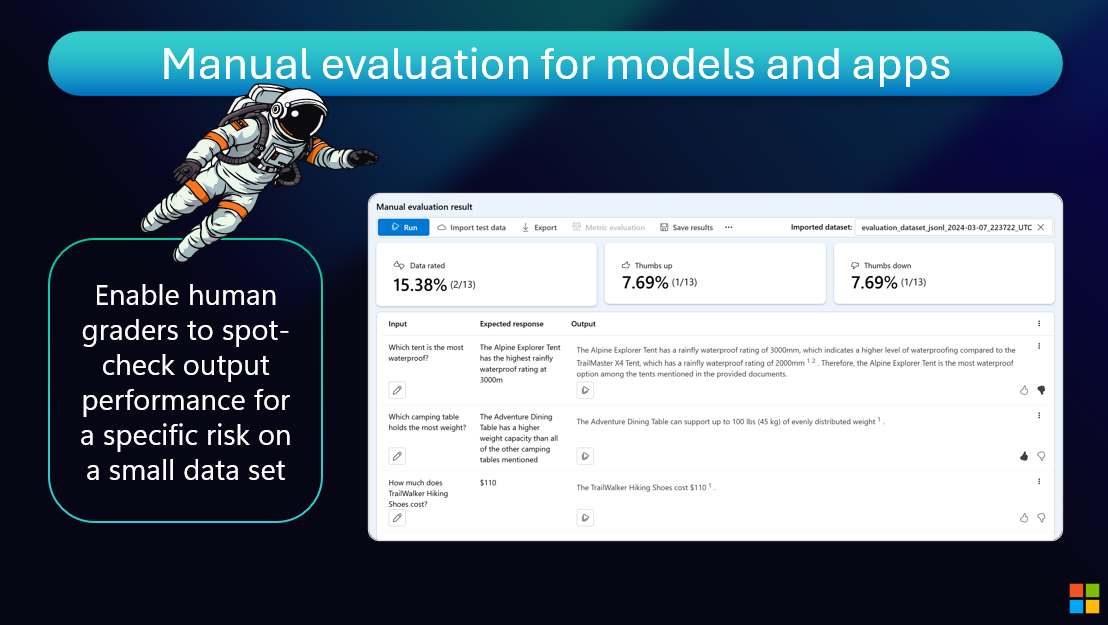

We recommend that you always start with manual evaluation, with human graders manually scoring generated outputs.

When mitigating specific risks, it's really helpful to keep manually checking progress against a small dataset until evidence of the risk is no longer observed before moving on to automated evaluation.

Azure AI Foundry provides an easy no-code interface for developers or domain experts to grade model outputs.

The Value of Manual Evaluation¶

Human judgment remains critical for nuanced quality assessment:

- Build Intuition: Understand failure modes before automating

- Catch Edge Cases: Identify issues automated metrics might miss

- Domain Expertise: Leverage subject matter expert knowledge

- Iterative Refinement: Quickly test mitigations on small datasets

- Benchmark Creation: Generate ground truth for automated metrics

Azure AI Foundry Manual Evaluation¶

Azure provides user-friendly tools for manual evaluation:

- No-Code Interface: Developers and domain experts can grade outputs without coding

- Annotation Workflows: Structured evaluation with custom criteria

- Team Collaboration: Multiple graders can assess the same outputs

- Export and Analysis: Results integrate with automated evaluation pipelines

Start manual, scale automated. Manual evaluation builds the understanding you need to design effective automated evaluation strategies.

Resources and Further Reading¶

Online Resources¶

- 🌐 Evaluate GenAI Applications

- 🌐 Evaluations in GitHub Actions

- 🌐 Evaluate generative AI models and applications by using Azure AI Foundry

Next Steps¶

Continue your learning journey:

Questions or feedback? Join the discussion on our GitHub repository or connect with the community.