Chapter 9: A Practical Guide to Operationalizing Trust in Agents

Overview

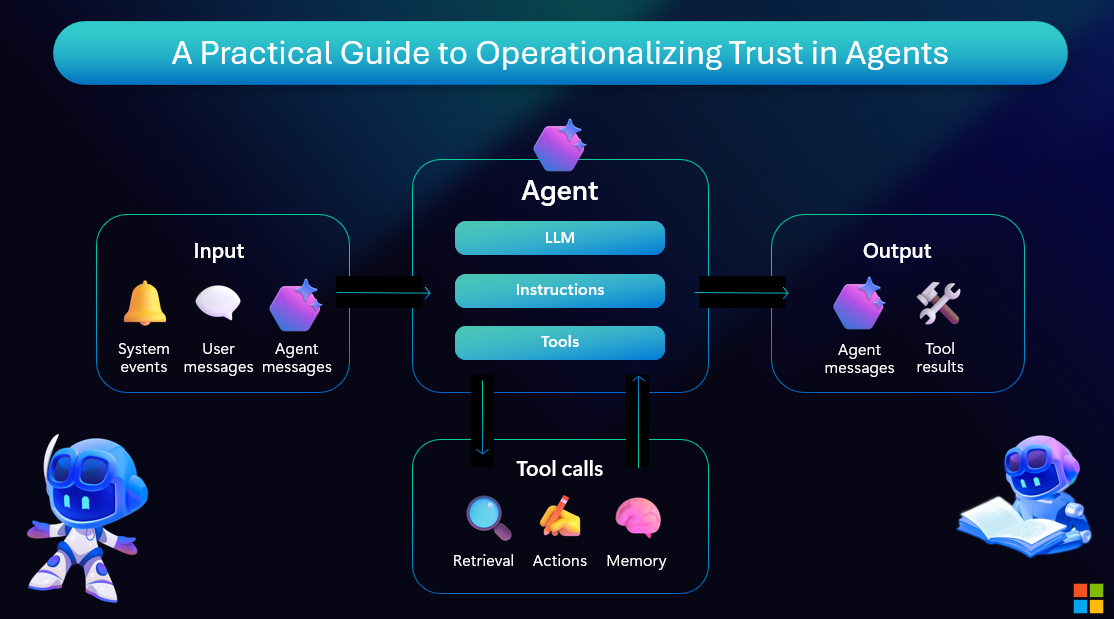

How does an enterprise move from aspiration to operational trust in agents? This chapter lays out a practical blueprint.

Practical Steps to Operationalize Trust

Moving from principles to practice requires concrete actions and tooling:

- Start with Use Case Definition: Clearly scope what the agent will and won't do

- Implement Evaluation Early: Use the Azure AI Evaluation SDK from day one

- Build in Layers: Model → Safety System → Application Logic → User Experience

- Monitor Continuously: Instrument with Azure Monitor for real-time trust signals

- Iterate Based on Feedback: Establish feedback loops with users and stakeholders
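The layering step above (Model → Safety System → Application Logic → User Experience) can be illustrated with a minimal, library-free sketch. Everything named here is a hypothetical stand-in rather than an Azure AI Foundry API: `fake_model` replaces a real model call, `BLOCKED_TERMS` replaces a real content-safety service, and `ALLOWED_TOPICS` encodes the use case scope. The sketch checks scope first so out-of-scope requests never reach the model, which is one reasonable ordering among several.

```python
from dataclasses import dataclass

# Hypothetical use case scope: topics this agent is allowed to handle.
ALLOWED_TOPICS = {"billing", "shipping"}
# Hypothetical blocklist standing in for a real content-safety service.
BLOCKED_TERMS = {"password", "ssn"}


@dataclass
class AgentResult:
    text: str   # message shown to the user (user experience layer)
    layer: str  # which layer decided the final outcome


def fake_model(prompt: str) -> str:
    """Stand-in for a model call; a real system would call a deployed model."""
    return f"Draft answer about: {prompt}"


def passes_safety(text: str) -> bool:
    """Toy safety system: reject text containing any blocked term."""
    lowered = text.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)


def in_scope(topic: str) -> bool:
    """Application logic: enforce what the agent will and won't do."""
    return topic in ALLOWED_TOPICS


def run_agent(topic: str, prompt: str, model=fake_model) -> AgentResult:
    # Application logic: refuse anything outside the defined use case.
    if not in_scope(topic):
        return AgentResult("Sorry, that is outside what this agent handles.", "application")
    # Safety system on the input, before the model sees it.
    if not passes_safety(prompt):
        return AgentResult("I can't help with that request.", "safety")
    draft = model(prompt)
    # Safety system on the output, before the user sees it.
    if not passes_safety(draft):
        return AgentResult("I can't share that response.", "safety")
    return AgentResult(draft, "model")
```

Recording which layer decided each outcome, as the `layer` field does, gives the monitoring step a simple signal to count and alert on.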

Tools for Trustworthy Agents

Azure AI Foundry and GitHub provide end-to-end support for building trustworthy agents:

- Development: GitHub Copilot suggests secure, responsible code patterns

- Evaluation: Azure AI Evaluation SDK measures quality and safety metrics

- Deployment: Azure AI Foundry provides managed endpoints with built-in safety

- Monitoring: Application Insights tracks performance and user satisfaction

- Governance: Azure Policy enforces compliance and audit requirements
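Whatever tooling produces the quality and safety metrics, the results usually feed a simple gate: average each metric over an evaluation set and flag any that fall below an agreed threshold. The sketch below shows that idea only. It does not call the Azure AI Evaluation SDK, and the metric names and threshold values are illustrative assumptions.

```python
from statistics import mean

# Hypothetical minimum acceptable average score per metric (0.0 to 1.0).
THRESHOLDS = {"relevance": 0.7, "safety": 0.95}


def summarize(scores):
    """Average each tracked metric across all evaluated samples."""
    return {metric: mean(sample[metric] for sample in scores) for metric in THRESHOLDS}


def failing_metrics(summary):
    """Return the sorted names of metrics whose average is below threshold."""
    return sorted(m for m, value in summary.items() if value < THRESHOLDS[m])


# Per-sample scores as an evaluation run might report them (made-up values).
sample_scores = [
    {"relevance": 0.8, "safety": 1.0},
    {"relevance": 0.5, "safety": 1.0},
]
failures = failing_metrics(summarize(sample_scores))
if failures:
    print("Do not deploy; metrics below threshold:", failures)
```

Running the same gate in CI and against production samples keeps pre-deployment evaluation and ongoing monitoring tied to one definition of "good enough".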

This isn't just theory: it is the operational framework Microsoft uses internally and makes available through Azure AI Foundry. With it, you can build agents that earn trust through design, measurement, and continuous improvement.

Resources and Further Reading

Online Resources

Next Steps

Continue your learning journey:

Questions or feedback? Join the discussion on our GitHub repository or connect with the community.